Problem: Email can take up too much of our time

Solution: Use text summarization and analysis to read the important parts

Every day, the average email user is confronted with a massive amount of email, most of it generated entirely by computers. Faced with this avalanche, users can either wade through each piece of loquacious mail themselves or ignore it en masse, missing the useful parts of automated emails, such as shipping confirmations and critical changes to accounts, as well as personal messages from actual people they care about. With the practical application of summarization technology, the tide can be stemmed and email can become useful again. Courier aims to make email more meaningful by extracting the useful bits into tidy summaries that are smartly organized and a joy to read.

Analyzing an Email Message

Our NLP pipeline at Courier is composed of three main processes:

Email Parser

In theory, an email is a structured text object. In theory. In practice, the structure of a raw message varies in so many twisted ways that it is very hard to implement a general parsing strategy that extracts the main elements of an email: headers (to, from, cc, date, subject), salutation, main content, threads, signature, etc. This is exactly the job of our first component: an email parser that analyzes a raw message to get its main relevant content.
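As a rough illustration of the easy part of this step, here is a minimal sketch that uses Python's standard `email` package to pull the headers and the plain-text body out of a well-formed message. It is not Courier's parser: the sample message and the `parse_email` and `header` helpers are invented for the example, and the harder problems mentioned above (salutations, threads, signatures, malformed messages) are not handled.

```python
from email import message_from_string
from email.policy import default

# Invented sample message, used only for illustration.
RAW_MESSAGE = """\
From: Alice <alice@example.com>
To: Bob <bob@example.com>
Cc: Carol <carol@example.com>
Subject: Quarterly report
Date: Mon, 05 Mar 2018 10:15:00 +0000
Content-Type: text/plain; charset="utf-8"

Hi Bob,

Please send me the quarterly report by Friday.

Thanks,
Alice
"""


def header(msg, name):
    """Return a header as a plain string, or None if it is missing."""
    value = msg[name]
    return str(value) if value is not None else None


def parse_email(raw):
    """Extract the main headers and the text body of a raw message."""
    msg = message_from_string(raw, policy=default)
    # Prefer the plain-text part; fall back to HTML if there is none.
    body_part = msg.get_body(preferencelist=("plain", "html"))
    body = body_part.get_content() if body_part is not None else ""
    return {
        "from": header(msg, "From"),
        "to": header(msg, "To"),
        "cc": header(msg, "Cc"),
        "subject": header(msg, "Subject"),
        "date": header(msg, "Date"),
        "body": body.strip(),
    }


parsed = parse_email(RAW_MESSAGE)
print(parsed["subject"])
print(parsed["body"])
```

Real-world messages often break the assumptions this sketch relies on, which is why a dedicated parsing component is needed.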

Email Classifier

Once we are able to extract the important elements of an email, including its plain text (or HTML content), we need to classify what type of message it is in order to apply the best summarization strategy. This second component is an email type classifier. First, it detects the language of the email (we currently support only English). After that, it classifies the email into one of two categories: Conversational or Botmail. If the email is classified as Botmail, a subclassifier identifies its subtype, e.g., Purchases and Payments, Travel, Social, etc.

Email Summarizer

Classified emails are then summarized following different methods. All types of emails require a specific NLP preprocessing analysis, ranging from simple steps, such as tokenization and sentence splitting, to more elaborate ones, including speech act classification or discourse relation extraction. Finally, NLP-preprocessed emails become the input of the third component, whose main role is to summarize and extract important information such as tasks.

Task Classifier

Our Task Classifier is a hybrid NLP approach that includes an ML classification module and a set of post-processing heuristics. Hybrid approaches in NLP are systems that combine statistical and rule-based methods to solve a given problem. In our case, the inclusion of rule-based methods was necessary to improve the performance of the ML classification model, specifically on borderline cases where it is difficult to decide whether a sentence is placing an explicit request or not.

The general workflow of our Task Classifier is as follows:

- The input is an email previously preprocessed with sentence splitting, tokenization, named entity recognition and speech act classification.

- An ML Classifier analyzes those sentences previously tagged as Command/Request or Desire/Need by our Speech Act Classifier and decides if a sentence belongs to the class TASK or NON-TASK.

- A set of post-processing rules is applied to analyze sentences classified as TASK, identify false positives and re-classify them as NON-TASK.

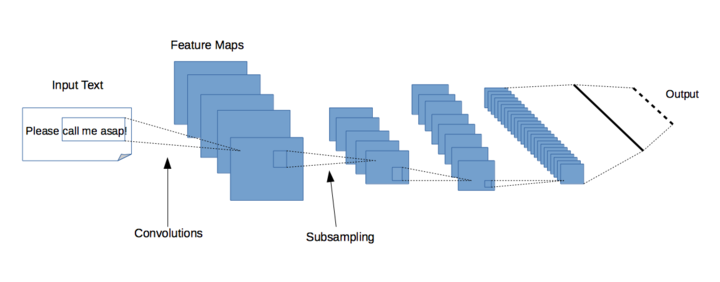
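The three steps of this workflow can be sketched as a small function. This is only an outline under stated assumptions: the `Sentence` type, the `classify_tasks` function, and the toy `toy_ml_predict` and `toy_see_attached_rule` stand-ins are hypothetical names invented for the example, and labelling sentences with other speech acts as NON-TASK is our simplification of "not analyzed".

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List

TASK, NON_TASK = "TASK", "NON-TASK"
# Speech acts that are passed on to the ML classifier (from the workflow above).
CANDIDATE_ACTS = {"Command/Request", "Desire/Need"}


@dataclass
class Sentence:
    text: str
    speech_act: str  # assigned upstream by the speech act classifier


def classify_tasks(
    sentences: Iterable[Sentence],
    ml_predict: Callable[[str], str],
    rules: Iterable[Callable[[str], bool]],
) -> List[str]:
    """Label each sentence as TASK or NON-TASK."""
    rules = list(rules)
    labels = []
    for sentence in sentences:
        # Step 1: only candidate speech acts reach the ML classifier.
        if sentence.speech_act not in CANDIDATE_ACTS:
            labels.append(NON_TASK)  # assumption: everything else is a non-task
            continue
        # Step 2: the ML classifier proposes a label.
        label = ml_predict(sentence.text)
        # Step 3: a matching rule flags a false positive and demotes it.
        if label == TASK and any(rule(sentence.text) for rule in rules):
            label = NON_TASK
        labels.append(label)
    return labels


# Toy stand-ins so the sketch runs; the real components are far richer.
def toy_ml_predict(text: str) -> str:
    return TASK if "please" in text.lower() else NON_TASK


def toy_see_attached_rule(text: str) -> bool:
    return "see attached" in text.lower()


labels = classify_tasks(
    [
        Sentence("Please send me the report.", "Command/Request"),
        Sentence("Please see attached.", "Command/Request"),
        Sentence("The meeting went well.", "Statement"),
    ],
    toy_ml_predict,
    [toy_see_attached_rule],
)
print(labels)
```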

ML Classifier

The Task Classifier is a binary classifier implemented as a Deep Learning model using the Keras + TensorFlow combo. The current architecture that powers Courier’s Task Classifier is the following:

- An Embedding layer with dense vectors of fixed size

- One Convolutional Neural Network (CNN) layer that generates 32 3×3 feature maps followed by a 2×2 MaxPooling layer

- One Convolutional Neural Network layer that generates 64 3×3 feature maps followed by a 2×2 MaxPooling layer

- A fully connected Dense layer
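To get a feel for how data shrinks as it flows through such a stack, the following sketch traces tensor shapes layer by layer in plain Python. Several things here are assumptions rather than facts about Courier's model: we read "32 3×3 feature maps" as 32 filters with a 3×3 kernel, we assume unpadded convolutions with stride 1 and non-overlapping pooling, and the input size (50 tokens, 100-dimensional embeddings) is invented for the example. The function names are hypothetical.

```python
def conv_out(size, kernel=3, stride=1):
    """Output length of an unpadded convolution along one axis."""
    return (size - kernel) // stride + 1


def pool_out(size, window=2):
    """Output length of non-overlapping max pooling along one axis."""
    return size // window


def trace_shapes(seq_len, emb_dim):
    """Return (layer name, output shape) pairs for the assumed stack."""
    # The embedded sentence is treated as a one-channel 2D "image".
    shapes = [("embedding", (seq_len, emb_dim, 1))]
    height, width = seq_len, emb_dim
    filters = 1
    for index, filters in enumerate((32, 64), start=1):
        height, width = conv_out(height), conv_out(width)
        shapes.append((f"conv{index}", (height, width, filters)))
        height, width = pool_out(height), pool_out(width)
        shapes.append((f"pool{index}", (height, width, filters)))
    # The Dense layer receives the flattened feature maps.
    shapes.append(("flatten", (height * width * filters,)))
    return shapes


# Assumed input size: 50 tokens, 100-dimensional embeddings.
shapes = trace_shapes(seq_len=50, emb_dim=100)
for name, shape in shapes:
    print(f"{name:10s} {shape}")
```

Under these assumptions, the Dense layer would receive a vector of 16,192 values per sentence.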

We were originally using a different architecture based on Recurrent Neural Networks (RNN) plus an Attention layer, but we recently switched to the CNN architecture described above because it classifies new, unseen data 3 to 4 times faster.

Courier is a very complex system in which we apply many Machine Learning models to the data contained in emails in order to extract meaningful information. At Codeq, we spend a lot of time optimizing our workflow and experimenting with different combinations of Machine Learning algorithms, in general, and Deep Learning algorithms, in particular, to find the optimal balance between generalization power and processing speed.

Bootstrapping

We performed a round of bootstrapping in order to improve the performance of the original trained model. We used the original model to automatically analyze and classify a set of 20,000 unlabeled examples from the Enron corpus [1] that were previously classified by our Speech Act Classifier as Command/Request, Desire/Need or Task-Question.

The bootstrapping process runs the Task Classifier and selects sentences labelled as TASK or NON-TASK with a high confidence score. The selected sentences are then used to increase the size of the original train set. This iterative process resulted in a set containing a total of 14,313 sentences, including 7,104 TASK and 7,209 NON-TASK sentences.
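The loop described above can be sketched as a generic self-training procedure. This is an illustration, not our production code: the `bootstrap` function, the `fit` callback, the `min_confidence` and `max_rounds` values, and the word-overlap `toy_fit` model are all assumptions made so that the example runs on its own.

```python
def bootstrap(train_set, unlabeled, fit, min_confidence=0.9, max_rounds=5):
    """Grow the train set with confidently self-labelled sentences.

    `fit` takes a list of (sentence, label) pairs and returns a function
    mapping a sentence to a (label, confidence) pair.
    """
    train_set = list(train_set)
    pool = list(unlabeled)
    for _ in range(max_rounds):
        predict = fit(train_set)
        confident, remaining = [], []
        for sentence in pool:
            label, confidence = predict(sentence)
            if confidence >= min_confidence:
                confident.append((sentence, label))
            else:
                remaining.append(sentence)
        if not confident:
            break  # nothing new was selected, so further rounds cannot help
        train_set.extend(confident)
        pool = remaining
    return train_set


def toy_fit(examples):
    """Toy word-overlap model standing in for the real classifier."""
    task_words, other_words = set(), set()
    for text, label in examples:
        target = task_words if label == "TASK" else other_words
        target.update(text.lower().split())

    def predict(text):
        words = text.lower().split()
        task_hits = sum(word in task_words for word in words)
        other_hits = sum(word in other_words for word in words)
        total = task_hits + other_hits
        if total == 0:
            return "NON-TASK", 0.0
        if task_hits >= other_hits:
            return "TASK", task_hits / total
        return "NON-TASK", other_hits / total

    return predict


seed = [("please send the report", "TASK"), ("thanks for the update", "NON-TASK")]
unlabeled = ["please send the invoice", "thanks for the invoice", "lunch tomorrow"]
grown = bootstrap(seed, unlabeled, toy_fit, min_confidence=0.7)
print(len(grown))
```

Sentences the model is unsure about, such as "lunch tomorrow" in this toy run, never enter the train set, which limits how much label noise the procedure introduces.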

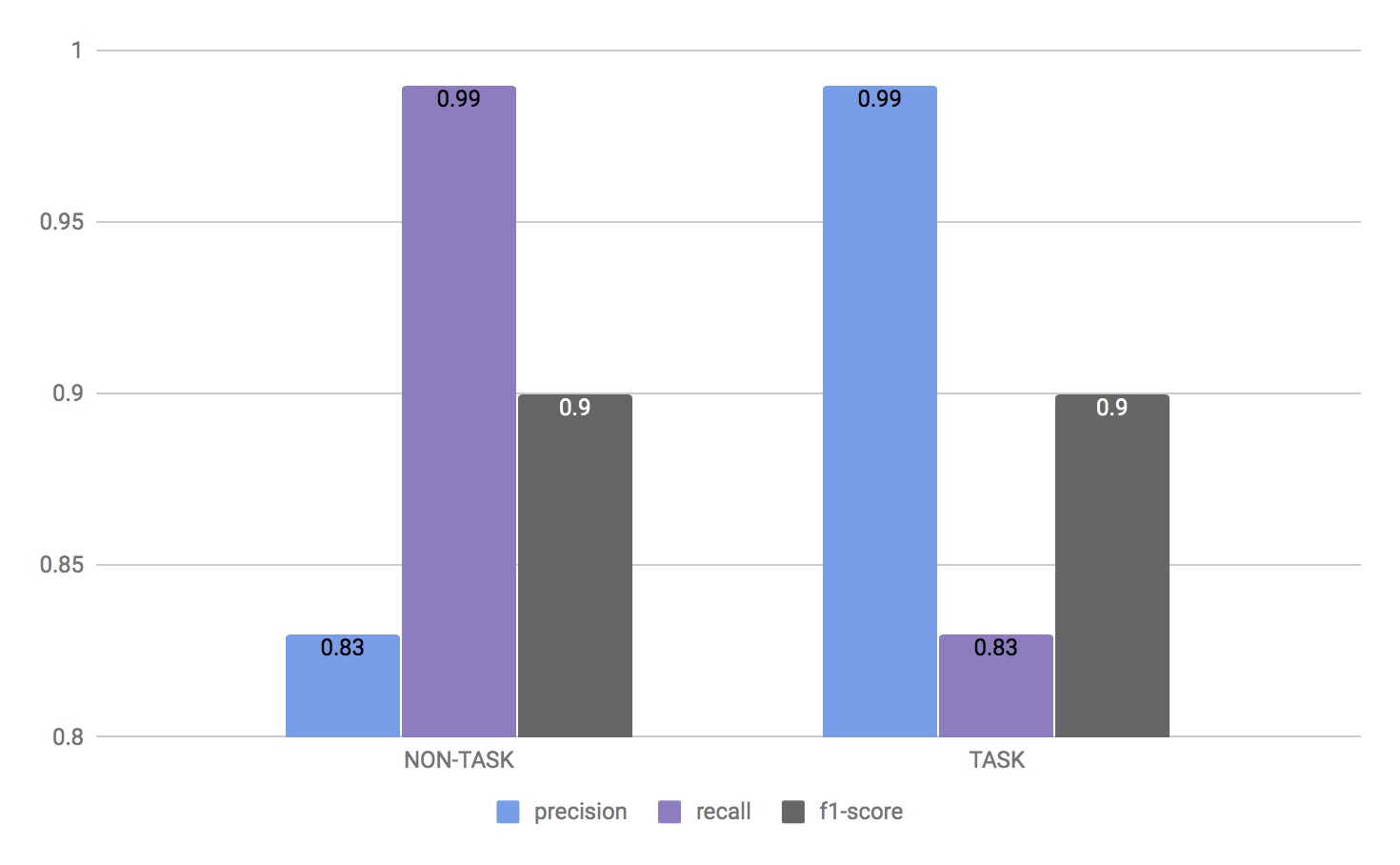

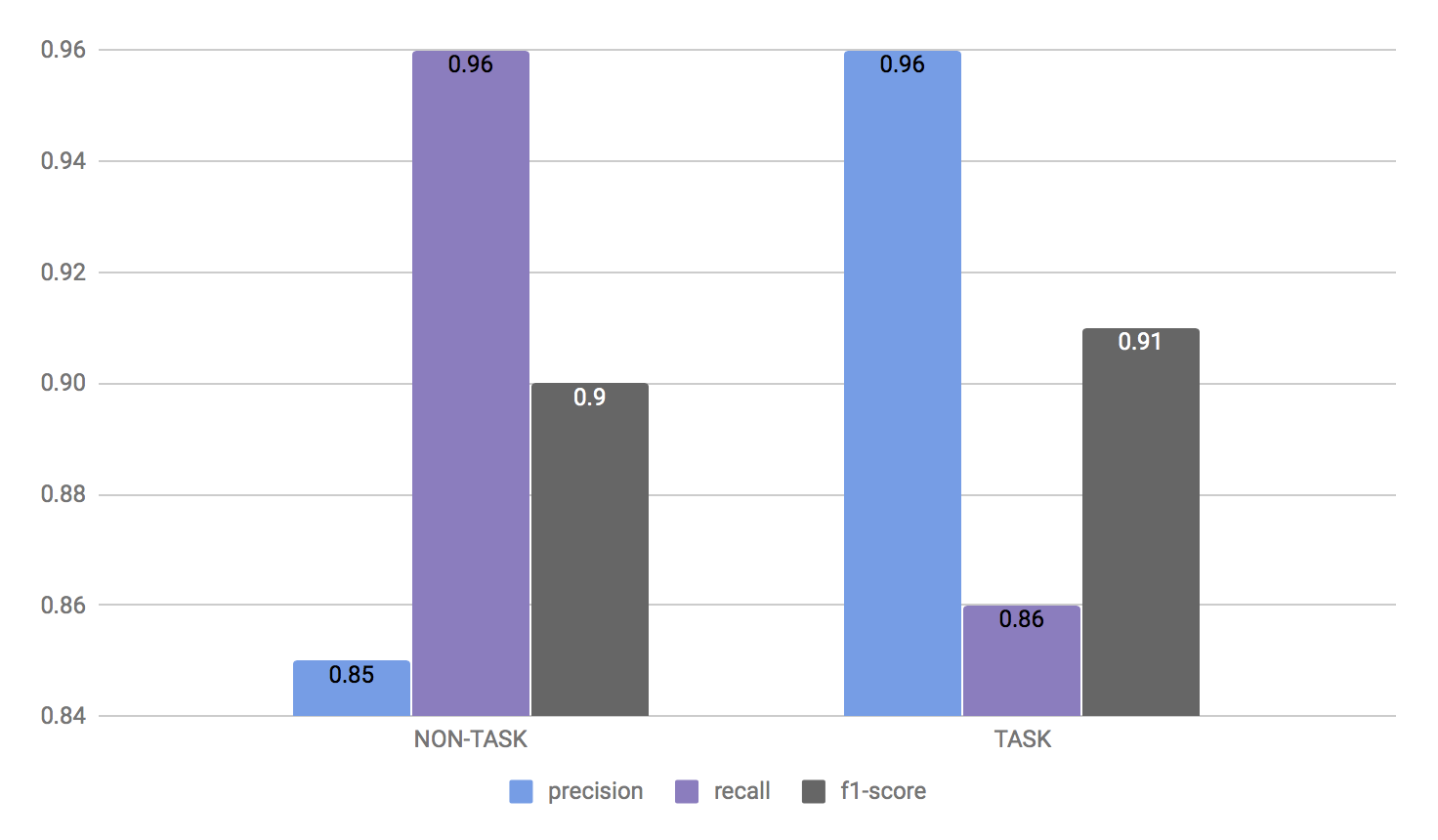

ML Classifier Evaluation

Evaluation on DEV corpus

Evaluation on TEST corpus

The charts above show the results of evaluating our final bootstrapped ML model on the dev and test corpora, using a probability threshold of 0.65 for class 1; that is, an instance is labelled TASK only if the model's confidence is higher than 0.65. The resulting model performs well overall, with high precision and reasonably good recall.
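The effect of the threshold on precision and recall can be shown with a few lines of Python. The probabilities and gold labels below are toy values invented for the example, not results from our corpora, and the helper names are hypothetical.

```python
def label_from_probability(p_task, threshold=0.65):
    """Assign TASK only when the model's confidence exceeds the threshold."""
    return "TASK" if p_task > threshold else "NON-TASK"


def precision_recall(gold, predicted, positive="TASK"):
    """Compute precision and recall for the positive class."""
    pairs = list(zip(gold, predicted))
    tp = sum(g == positive and p == positive for g, p in pairs)
    fp = sum(g != positive and p == positive for g, p in pairs)
    fn = sum(g == positive and p != positive for g, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Toy data: model probabilities for class TASK and the gold labels.
probabilities = [0.95, 0.70, 0.60, 0.55, 0.40]
gold = ["TASK", "TASK", "TASK", "NON-TASK", "NON-TASK"]

strict = [label_from_probability(p, threshold=0.65) for p in probabilities]
lenient = [label_from_probability(p, threshold=0.50) for p in probabilities]

strict_precision, strict_recall = precision_recall(gold, strict)
lenient_precision, lenient_recall = precision_recall(gold, lenient)
print(strict_precision, strict_recall)
print(lenient_precision, lenient_recall)
```

On this toy data the stricter threshold removes the false positive at the cost of missing one real task, which is the trade-off we accept in favor of precision.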

Post-Processing Rules

In Courier we are very interested in showing only good task sentences to end users. As we have described, in conversational emails there are many “linguistic, stylistic, pragmatics and polite variations” that make it difficult, even for human annotators, to decide if a sentence is requesting an obligation or not.

With this in mind, we decided to implement a set of post-processing rules in order to try to detect false positives, i.e., sentences that superficially seem to be tasks but are not placing any obligation on the recipient of an email.

We compiled a new corpus of 1,250 sentences, taken from the Enron corpus and our own inboxes, that had already been classified as tasks by the ML classifier. We used this corpus to find examples of the borderline cases mentioned above and manually relabelled them as TASK or NON-TASK, putting special emphasis on requiring that a task signal an explicit and direct obligation. Finally, we used this set to manually implement a set of post-processing rules.

Unlike the ML classifier, the post-processing step is a pure rule-based approach which searches for specific patterns, for example syntactic or lexical. If the sentence previously tagged as TASK fits into such a pattern, the sentence is then relabeled as NON-TASK.

Some of the post-processing rules we developed are the following:

- “See Attached” filter. One common pleasantry that we want to avoid retrieving as a task is a sentence of the type “Please see attached”. This filter tries to identify cases that place no obligation on the reader and only state the presence of an attachment.

- “Let me know” filter. This filter targets another common type of pleasantry that does not contain a clear obligation, for example “Just let me know”.

- Request for inaction filter. This filter reviews sentences that are likely to be negative commands, requests that the reader refrain from doing something, etc., and applies several criteria to decide whether such a sentence should still be viewed as a task, for example “Please do not forget to send me the document”.

- Stop list of patterns. This filter searches for occurrences of some direct patterns that are commonly used in non-task sentences, for example “please (ignore|forgive) me for…”.
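To make the idea concrete, here is a minimal sketch of how filters like these could be written with regular expressions. The patterns, the function names, and the small list of verbs that keep a negative command as a task are simplified assumptions invented for the example; the actual rules apply more criteria than shown here.

```python
import re

# Sentence that only announces an attachment, e.g. "Please see attached."
SEE_ATTACHED = re.compile(
    r"(?:please\s+)?(?:see|find)\s+(?:the\s+)?attached(?:\s+\w+){0,2}[.!]?",
    re.IGNORECASE,
)
# Bare pleasantry with no concrete request, e.g. "Just let me know."
LET_ME_KNOW = re.compile(
    r"(?:(?:just|please)\s+)*let\s+me\s+know[.!]?", re.IGNORECASE
)
# Negative command followed by its verb, e.g. "do not reply".
NEGATION = re.compile(r"\b(?:do\s+not|don['’]t|never)\s+(\w+)", re.IGNORECASE)
# Assumption: a negated verb like "forget" still expresses an obligation.
KEEP_AS_TASK_VERBS = {"forget", "fail"}
# Stop pattern taken from the example in the list above.
STOP_PATTERN = re.compile(r"\bplease\s+(?:ignore|forgive)\s+me\s+for\b", re.IGNORECASE)


def is_see_attached(text):
    return SEE_ATTACHED.fullmatch(text.strip()) is not None


def is_bare_let_me_know(text):
    return LET_ME_KNOW.fullmatch(text.strip()) is not None


def is_request_for_inaction(text):
    match = NEGATION.search(text)
    return match is not None and match.group(1).lower() not in KEEP_AS_TASK_VERBS


def matches_stop_pattern(text):
    return STOP_PATTERN.search(text) is not None


RULES = (
    is_see_attached,
    is_bare_let_me_know,
    is_request_for_inaction,
    matches_stop_pattern,
)


def post_process(text, label):
    """Relabel a TASK sentence as NON-TASK if any rule flags it."""
    if label == "TASK" and any(rule(text) for rule in RULES):
        return "NON-TASK"
    return label


for sentence in (
    "Please see attached.",
    "Please see attached and sign the contract.",
    "Just let me know.",
    "Please do not forget to send me the document",
    "Please forgive me for the late reply.",
):
    print(sentence, "->", post_process(sentence, "TASK"))
```

Note that the rules only ever demote a TASK to NON-TASK; a sentence the ML classifier rejected is never promoted.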

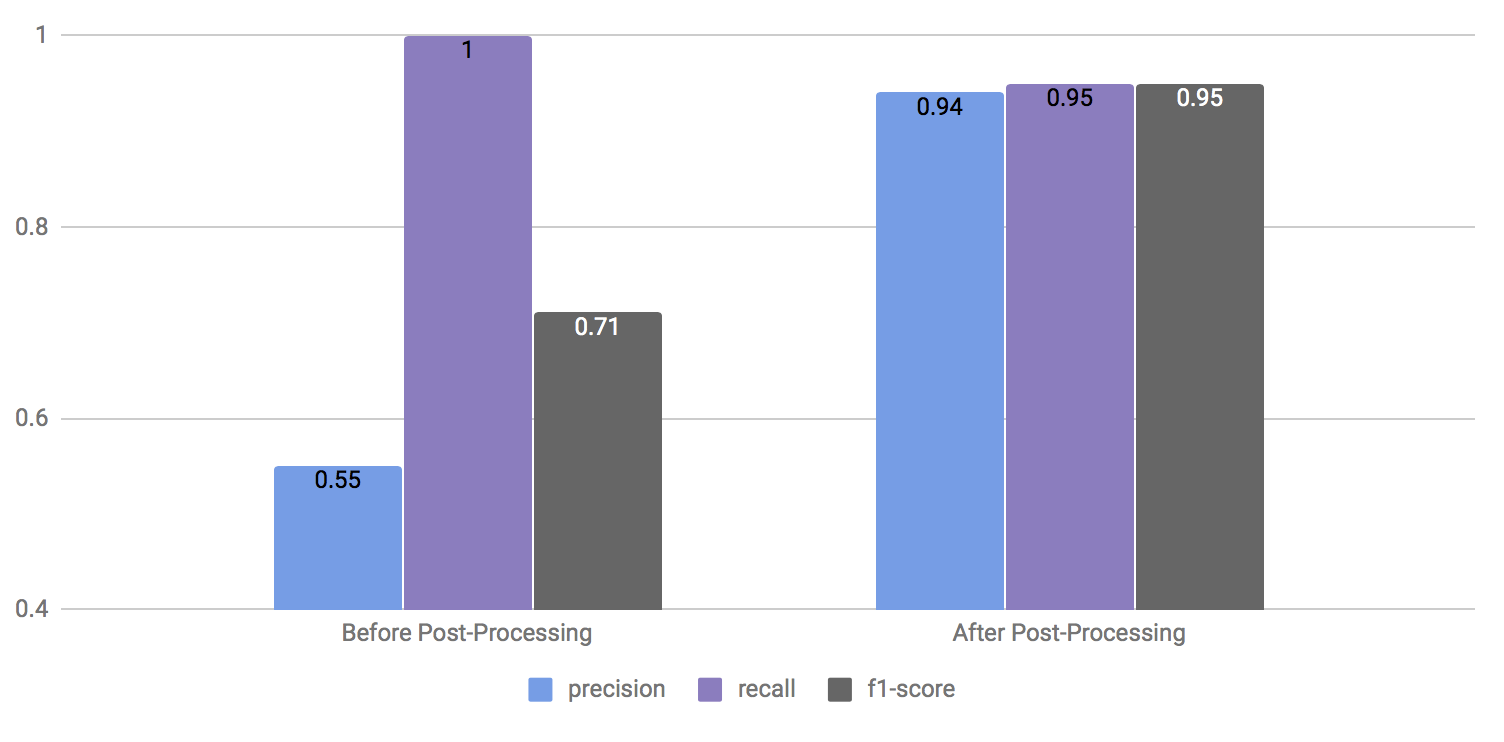

Post-Processing Rules Evaluation

In the chart above we can see the results of evaluating our system on a subset of the corpus previously described, before and after applying the post-processing rules. These results cover only the class TASK. Our goal, as we mentioned before, is to favor precision, which we were able to improve at the cost of losing some recall.

Conclusions

In this post we wanted to give a brief overview of how we automatically extract tasks from emails. The identification of requests in emails can be seen as a text classification problem and, as in many NLP-related tasks, using statistical or rule-based approaches alone is not enough to achieve high accuracy.

In our case, one of the main challenges we encountered was avoiding showing false positives as tasks, and an ML classifier alone was not enough to deal with this problem. We needed to implement extralinguistically motivated heuristics to filter out sentences that have the form of a task but are not being used to indicate a clear request.

We believe that there is still room for improvement in the automatic recognition of tasks, and we are doing our best to improve the quality of what the users can see in Courier. Try it out for yourself with our NLP API Demo.