Problem: Finding quality information in app reviews is difficult and frustrating

Solution: Use text analysis to derive more meaning from reviews

How do you translate a star rating? It’s an inconsistent system, subject to the interpretation of its reviewers. Review data is neither well organized nor easily presented to users.

App Store search parameters are lacking. You can only search by keyword, and your results are subject to what the proprietary algorithms of the app store deem most relevant. Although each app is rated on a generic star-based system, the volume of reviews is overwhelming, and users are left with no options or technology to help them make accurate decisions about the apps they really want or need. For example, two or more apps belonging to the same category (e.g., productivity apps) can be equally highly rated yet differ significantly in certain aspects (e.g., their speed).

There are millions of apps available with hundreds of millions of reviews and you could be missing gems that are perfect for your needs.

The information living in app stores and similar platforms containing online reviews carries increasingly important value when it comes to customer buying decisions. While modern search engines like Google and Bing strive to organize and lend accessibility to information on the Web, the bounded domain of app stores remains a platform where such engines do not operate. A technology that summarizes reviews would reduce the time invested in reading and also enable customers and manufacturers to consider the aggregate of reviews in nuanced ways, rather than merely depending on reading more individual reviews.

CrowdChunk (CC) is deep summarization software that leverages the powers of sentiment analysis, linguistic socio-pragmatics (e.g., context of interaction, user intention and communication network), and topical and behavioral focus to offer a nuanced topic-focused summarization solution on app reviews.

Taking the discovery task off the shoulders of customers and manufacturers, CrowdChunk employs cutting-edge natural language processing (NLP) technologies to help organize information in the modern digital store. The mission of NLP as a field is to teach machines to understand and generate human language.

CC deploys sophisticated Machine Learning algorithms and natural language processing technologies to analyze app reviews and detect prototypical statements that condense reviewers’ aggregate perspective on a set of curated topics (aka app personality traits).

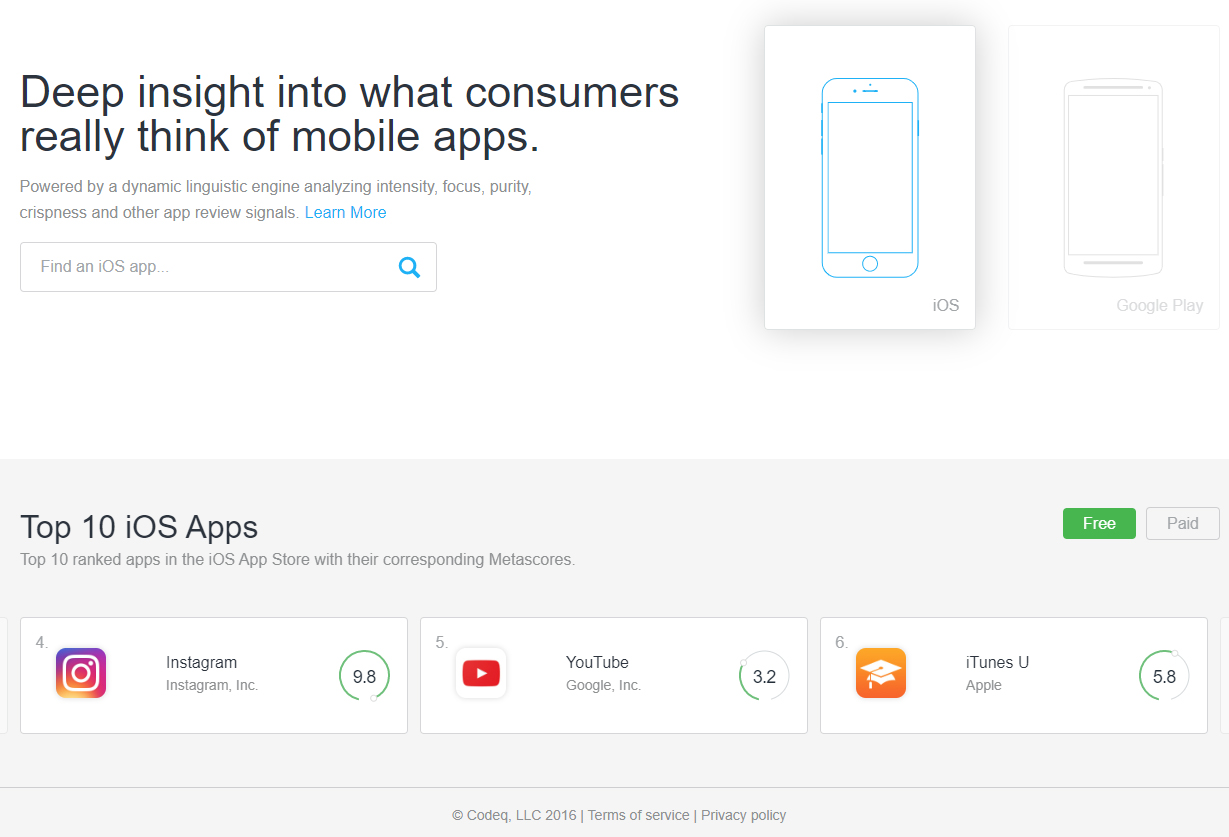

Finally, CC calculates a collective ‘Metascore’ of the sentiment expressed on those topics, which we present to users along with the individual scores on the different traits in an appealing and easy-to-read visual form.

At Codeq, we have worked hard to deploy an effective solution to accomplish all these tasks.

The first part of this solution is focused on building a state-of-the-art sentiment analysis system for the app review domain. Our research shows that the most widely used sentiment detection tools on the market are not fully effective. In fact, the vast majority of such tools are rudimentary, owing to the many challenges facing the development of highly efficient sentiment analysis software. Faced with the fuzziness and context-dependence of the very concept of sentiment, several tools that claim to perform sentiment analysis depend on word-matching against pre-compiled generic lists coming from not necessarily related domains. The danger of such an approach is that human language is intrinsically ambiguous, which means the sentiment of a text changes easily according to context. For example, even though the word “fast” is positive, the overall sentiment of a sentence involving it could be negative (e.g., “it causes my battery to die fast”). Similarly, the negative word “crashes” is positive in the sentence “it never crashes.” These examples illustrate only two of the many difficulties faced in sentiment analysis: semantic compositionality in the multiword expression “die fast” and negation in the phrase “never crashes.”
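
To make the two failure modes above concrete, here is a minimal sketch in Python. It is not CrowdChunk's method: the tiny lexicon, the negator list, and both function names are assumptions invented for illustration. The first scorer is plain word-matching; the second adds one hand-written negation rule.

```python
# Toy polarity lexicon and negator list (illustrative assumptions only).
LEXICON = {"fast": 1, "great": 1, "crashes": -1, "slow": -1}
NEGATORS = {"never", "not", "no"}

def tokenize(text):
    """Lowercase, split on whitespace, and strip surrounding punctuation."""
    return [tok.strip(".,!?\"'").lower() for tok in text.split()]

def word_match_score(text):
    """Sum lexicon polarities, ignoring context entirely."""
    return sum(LEXICON.get(tok, 0) for tok in tokenize(text))

def negation_aware_score(text):
    """Flip the polarity of a lexicon word that directly follows a negator."""
    score = 0
    flip = False
    for tok in tokenize(text):
        if tok in NEGATORS:
            flip = True
            continue
        polarity = LEXICON.get(tok, 0)
        score += -polarity if flip else polarity
        flip = False
    return score

print(word_match_score("it never crashes"))       # -1: wrongly negative
print(negation_aware_score("it never crashes"))   # 1: negation handled
print(negation_aware_score("it causes my battery to die fast"))  # 1: still wrong
```

The negation rule repairs “never crashes,” but “die fast” still scores as positive, because no single-word rule captures the meaning of the whole expression. That gap is what motivates learned, context-aware models.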

Domain specificity also challenges the development of effective sentiment analysis systems. A sentiment system built for a given domain will not work as successfully on other domains. For example, a sentiment analysis solution for the food industry will not be useful for mining app reviews. While lexical items such as “delicious,” “yummy,” and “fruity” are relevant to a food-domain sentiment system, these are not as important to app review domain models. In practice, domain specificity means that building a new sentiment analysis system requires carefully labeling non-trivial amounts of data with polarity tags like “positive” and “negative.” Since data annotation is time consuming and costly, many industries do not take such a route and hence end up using data already labeled for other domains. At Codeq, we have invested substantial effort in finding creative solutions to these and other sentiment-specific challenges.

Computational linguists are using NLP to extract the most important parts of review texts and organize them into concise summaries (a task known as text summarization). Intelligent summarization of online product reviews, like those associated with apps, saves time for app store users.

Tapping into the wisdom of the app crowd and extracting meaning from more than 65 million reviews across two million apps is anything but simple, but it is not impossible. A solution is needed to give this untapped asset a starring role in resolving the ongoing confusion in app discovery.

Working with 50 million reviews, we have built sophisticated models that meet the challenges posed by multi-word expressions and negation, but also tackle many other challenges (e.g., the neologisms, non-standard typography, and emojis characteristic of online language, as well as sarcasm). Our models feature semi-supervised learning, which is a class of machine learning algorithms that exploit human-labeled data expanded with considerable amounts of softly-annotated and/or non-annotated data. We have considerably expanded our carefully annotated app review data sets, allowing us to project human labels onto more than half a million data points from which the machine learns.

Our models tease apart factual information from subjective or opinionated text pieces and employ statistical methods over huge amounts of data to learn specialized sentiment lexica. We also exploit different machine learning paradigms to detect sentiment intensity (e.g., deciding that “sort of efficient” is less positive than “damn efficient”).
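
The idea of sentiment intensity can be illustrated with a deliberately simple, rule-based stand-in. The models described above are learned from data; the sketch below is not that approach. The base polarities, the modifier weights, and the function name `intensity` are all assumptions chosen only to show the intended ordering.

```python
# Hand-picked base polarities and intensity modifiers (illustrative assumptions).
BASE_POLARITY = {"efficient": 1.0, "slow": -1.0}
MODIFIERS = {"sort of": 0.5, "kind of": 0.5, "very": 1.5, "damn": 2.0}

def intensity(phrase):
    """Scale a word's base polarity by a leading modifier, if one is present."""
    text = phrase.lower().strip()
    weight = 1.0
    for modifier, factor in MODIFIERS.items():
        if text.startswith(modifier + " "):
            weight = factor
            text = text[len(modifier) + 1:]
            break
    return weight * BASE_POLARITY.get(text, 0.0)

# "sort of efficient" scores below plain "efficient", which scores below "damn efficient".
print(intensity("sort of efficient"), intensity("efficient"), intensity("damn efficient"))
```

A learned model would estimate such weights from labeled or softly-annotated data rather than from a hand-written table.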

The second part of our solution is devoted to building precise topic classifiers that, as in the case of sentiment analysis, go well beyond the popular, but ineffective, word-matching solutions. In these models, we take word context into account and pay attention to subtleties of language use that plain word-matching solutions fail to explore.

CC applies state-of-the-art machine learning algorithms to classify statements as mentioning none, one, or several personality traits. Personality traits are common topics that app reviewers mention when they leave positive or negative feedback on the applications they are reviewing.

Personality Traits

Enjoyment

- Statements classified as mentioning the Enjoyment personality trait might be reviewing an app as fun, enchanting, and engaging, or as forgettable and boring, depending on the direction of the sentiment expressed in that statement.

Presentation

- Statements that mention the Presentation personality trait review applications from the point of view of how beautiful, attractive, intuitive, and enjoyable their graphics, design and general layout are.

Performance

- Statements that mention the Performance personality trait discuss how well applications work, that is, whether apps drain battery life, use excessive data, or are slow and laggy.

Value

- Finally, statements classified as mentioning the Value personality trait review applications from the perspective of how their price correlates with the usefulness or enjoyment they bring to users, that is, whether an application is totally worth its price or whether it requires excessive additional purchases to reach higher levels or unlock more features.

Once all app reviews have been analyzed in order to extract opinionated topic-focused statements, CC generates summaries, focused on each personality trait, of the aggregate opinion of all app reviewers.

CC explores the joined vector spaces defined by the concatenation of sentiment and specific topic vectors and finds the prototypical statements that are most central to each vector space. We call these statements “canonical statements” as they condense the aggregate opinion of all app reviewers about particular personality traits.

CC then proceeds to find the statements that are most similar to each “canonical statement” in order to find their support, that is, the number of statements that convey a similar opinion about each particular personality trait.
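
The two steps above, finding a canonical statement and then counting its support, can be sketched as follows. This is one plausible reading, not a description of CC's internals: the source does not say how centrality or similarity is measured, so the centroid, cosine similarity, the `threshold` parameter, and the toy vectors are all assumptions.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def centroid(vectors):
    """Component-wise mean of a list of vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]

def canonical_statement(statements, threshold=0.9):
    """Return the most central statement and its support count.

    statements: list of (text, sentiment_vector, topic_vector) tuples.
    Support counts the other statements whose joined vector has cosine
    similarity >= threshold with the canonical statement's vector.
    """
    texts = [text for text, _, _ in statements]
    # Concatenate sentiment and topic vectors into one joined vector.
    joined = [list(sent) + list(topic) for _, sent, topic in statements]
    center = centroid(joined)
    best = max(range(len(joined)), key=lambda i: cosine(joined[i], center))
    support = sum(
        1
        for i, vec in enumerate(joined)
        if i != best and cosine(vec, joined[best]) >= threshold
    )
    return texts[best], support

# Hypothetical Performance statements with made-up vectors:
# sentiment = [positive, negative], topic = [enjoyment, presentation, performance].
performance_statements = [
    ("Runs smoothly, never lags", [0.9, 0.1], [0.0, 0.0, 1.0]),
    ("Super fast and stable", [0.8, 0.2], [0.0, 0.1, 0.9]),
    ("Very responsive app", [0.85, 0.15], [0.0, 0.0, 1.0]),
    ("Drains my battery quickly", [0.1, 0.9], [0.0, 0.0, 1.0]),
]
text, support = canonical_statement(performance_statements)
print(text, support)
```

With these toy vectors the three positive statements cluster together, so the canonical statement is one of them and the lone negative statement does not count toward its support.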

In addition to highlighting the statements that best summarize the aggregate opinion of all app reviewers, CC calculates a global score, which we call Metascore, that ranks applications according to the degree to which app reviewers like an application. In order to calculate the Metascore, we first calculate a score for each individual personality trait by finding the ratio of positive to negative statements extracted from app reviews.

Subsequently, the personality trait scores are aggregated and combined with the overall star score that app reviewers assigned to each application.

Finally, this combined score is weighted using a confidence factor that reflects the number of reviews analyzed to derive it, so that applications with more reviews, and thus with higher confidence in the score assigned to them, rank more prominently than applications for which fewer reviews were analyzed to calculate their global Metascore.
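
The three Metascore steps can be sketched in a few lines of Python. The text does not give the actual formulas, so everything specific here is an assumption made for illustration: the trait score as the share of positive statements, the equal-weight average with the normalized star rating, the confidence factor `n / (n + prior_reviews)`, the 0 to 100 scale, and all names.

```python
def trait_score(positive, negative):
    """Share of positive statements for one trait (0.5 when there is no data)."""
    total = positive + negative
    return positive / total if total else 0.5

def metascore(trait_counts, star_rating, n_reviews, prior_reviews=50):
    """Combine trait scores, star rating, and review volume into one score.

    trait_counts: {trait_name: (positive_count, negative_count)}
    star_rating:  average star rating on a 1 to 5 scale
    n_reviews:    number of reviews analyzed
    """
    # Step 1: one score per personality trait.
    trait_scores = {t: trait_score(p, n) for t, (p, n) in trait_counts.items()}
    # Step 2: aggregate the traits and combine with the star rating.
    trait_mean = sum(trait_scores.values()) / len(trait_scores)
    combined = 0.5 * trait_mean + 0.5 * (star_rating / 5.0)
    # Step 3: weight by a confidence factor that grows with review volume.
    confidence = n_reviews / (n_reviews + prior_reviews)
    return 100 * confidence * combined, trait_scores

counts = {
    "Enjoyment": (80, 20),
    "Presentation": (60, 40),
    "Performance": (30, 70),
    "Value": (50, 50),
}
score_many, traits = metascore(counts, star_rating=4.0, n_reviews=200)
score_few, _ = metascore(counts, star_rating=4.0, n_reviews=10)
print(round(score_many, 2), round(score_few, 2))
```

Two apps with identical trait counts and star ratings end up with different scores here purely because of review volume, which is the effect the confidence factor is meant to produce.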

CrowdChunk is deep summarization software, built with the Codeq API, that leverages the powers of sentiment analysis, linguistic socio-pragmatics, and topical and behavioral focus to offer a nuanced topic-focused summarization solution to an issue caused by vast amounts of unstructured data: app reviews. By deploying sophisticated Machine Learning algorithms and natural language processing technologies to analyze app reviews and detect prototypical statements that condense reviewers’ aggregate perspective on a set of curated topics, we can turn noise into actionable signal.